Get Me Out

Trailer

A co-op puzzle game in which communication is key to your escape.

Interaction System

A great escape room needs to make the mundane, like searching through drawers and scanning through documents, feel like an exciting expedition. With the interaction system at the heart of Get Me Out's experience, I needed to push tactility and reactivity as far as they would go. Whilst snappy animation-based buttons and keypads were used, I am most proud of the physics-based hold and grab mechanics.

Holding

Initial implementations of the hold system parented the held object to the first-person camera, offset a short distance in front of it. Whilst the approach was a good starting point, it felt rigid. Crucially, objects lacked momentum and reacted poorly to their surroundings.

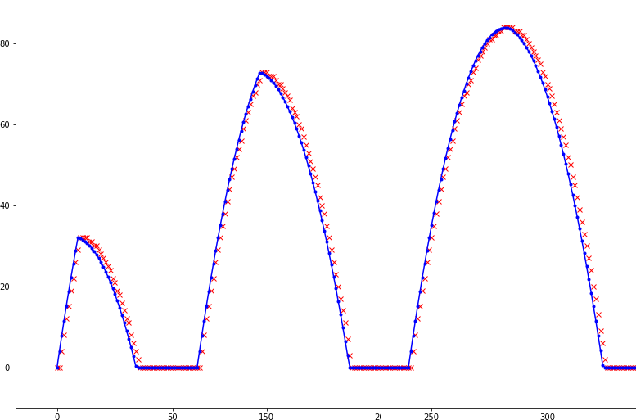

Thankfully, I have a trick that'll put a spring in any object's step: oscillators! As before, I used an empty child offset in front of the first-person camera, although this time to denote the target transform for held objects. I was then able to turn the hold behaviour on or off by simply enabling or disabling the oscillator component. From this simple approach, held objects gain physics-based easing and the ability to be displaced by the environment, whilst also conserving linear momentum, so objects can be thrown at no extra cost. Further features then come naturally. For instance, controlling the distance at which an object is held is just a matter of manipulating the target transform's local forward position.
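To illustrate the idea outside of Unity, here is a minimal Python sketch of a linear oscillator modelled as a damped spring. It is not the project's component: the `Oscillator` class, the `step` function, the tuning values and the semi-implicit Euler integration are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Oscillator:
    """Damped spring that pulls a body toward a target position."""
    stiffness: float = 100.0  # spring constant (hypothetical tuning value)
    damping: float = 10.0     # velocity damping (hypothetical tuning value)
    mass: float = 1.0
    enabled: bool = True      # disabling the component releases the object

    def force(self, position, velocity, target):
        if not self.enabled:
            return (0.0, 0.0, 0.0)
        return tuple(
            -self.stiffness * (p - t) - self.damping * v
            for p, v, t in zip(position, velocity, target)
        )


def step(osc, position, velocity, target, dt):
    """Advance one physics tick with semi-implicit Euler integration."""
    force = osc.force(position, velocity, target)
    velocity = tuple(v + f / osc.mass * dt for v, f in zip(velocity, force))
    position = tuple(p + v * dt for p, v in zip(position, velocity))
    return position, velocity
```

Because the spring only ever applies forces, disabling it leaves the body's velocity untouched, which is why a released object keeps moving and can be thrown.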

All that's missing at this point is rotation: held objects don't yet turn with the player's look direction. This is remedied by adding a torsional oscillator component alongside the linear one. Further features once again come naturally; rotating held objects for inspection is just a matter of manipulating the target transform's local rotation.
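A torsional oscillator can be sketched the same way. The Python below is a simplified, single-axis illustration (angles in degrees) rather than the full 3D rotation a game engine would use; the function names and tuning values are hypothetical. The one detail worth showing is that the restoring torque should follow the shortest way round the circle.

```python
def shortest_angle(from_deg, to_deg):
    """Signed smallest rotation from from_deg to to_deg, in [-180, 180)."""
    return (to_deg - from_deg + 180.0) % 360.0 - 180.0


def torsional_step(angle, angular_velocity, target, dt,
                   stiffness=80.0, damping=12.0, inertia=1.0):
    """Advance a damped torsional spring by one tick (semi-implicit Euler)."""
    # Restoring torque acts along the shortest arc toward the target angle.
    torque = stiffness * shortest_angle(angle, target) - damping * angular_velocity
    angular_velocity += torque / inertia * dt
    angle = (angle + angular_velocity * dt) % 360.0
    return angle, angular_velocity
```

Starting at 350 degrees with a target of 10 degrees, the spring turns 20 degrees forward through zero rather than 340 degrees backward.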

Hold Interaction

Player Hold

Player Hold Distance

Player Hold Rotation

Grabbing

I distinguished grabbing from holding, as grabbed objects have unique limitations to their motion. For example, desk drawers are grabbed and can only slide along a single fixed axis. Nor are all grabbed objects equal; cupboard doors rotate about a single axis determined by their hinges, whilst remaining fixed in position. Due to the significant difference in these behaviours, I further subdivided grabbing into its linear and torsional forms.

Whilst I was once again able to use oscillators for their responsive physics-based benefits, I soon encountered a new problem: how could I accurately interpret how the player wants to move or rotate a grabbed object based on mouse input alone? Favouring tactility over the mundane, I decided to try my hand at deriving a look-vector-based approach.

For the linear case, the problem becomes one of interpreting which point along the fixed axis of motion best represents where the player's looking. After playing around with some ways of using colliders to assist in solving the problem, I landed on more elegant analytical solutions with better results.

The initial analytical solution came from laying a mathematical plane along the fixed axis of motion and finding the point of intersection of the camera's look direction and the plane. The results were much more predictable than with colliders, but still performed poorly in some cases. For instance, whilst a single vertical plane works great from a 'side on' viewpoint, it's a very poor analogue from a 'top down' viewpoint. Introducing multiple planes and choosing the one with the greatest dot product can help remedy this issue. Wanting continuous transitions between planes and a maximised dot product, I kept looking for ways to improve the solution. I found that substituting the multiple planes for a single plane which is dynamically oriented perpendicular to the camera's look direction worked well.

Interpretive problems like this are tricky; too many layers of substitution from the core problem can give poor results. Whilst this analytical solution has the best results of anything I've tried, it isn't flawless. Due to the dynamic plane having a fixed position, the intersection is a poor interpretation when the player is looking away from the origin. Thankfully, that case is typically encountered at larger displacements, which wasn't a requirement for Get Me Out. I'd be interested in knowing how others have approached this problem!
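The camera-facing plane can be sketched in a few lines of Python. This is one reading of the description above, not the project's code: it assumes the plane passes through a fixed origin on the axis with its normal set to the look direction, and that the hit point is then projected onto the axis and clamped to the drawer's travel. All names are hypothetical, and `look_dir` and `axis_dir` are assumed to be unit vectors.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(a, s):
    return tuple(x * s for x in a)


def ray_plane(origin, direction, plane_point, plane_normal):
    """Intersection of a ray with a plane, or None if parallel or behind."""
    denom = dot(direction, plane_normal)
    if abs(denom) < 1e-9:
        return None
    t = dot(sub(plane_point, origin), plane_normal) / denom
    if t < 0.0:
        return None
    return add(origin, scale(direction, t))


def linear_grab_target(cam_pos, look_dir, axis_origin, axis_dir, min_s, max_s):
    """Displacement along the axis that best matches the look ray."""
    # The plane faces the camera: its normal is the look direction itself.
    hit = ray_plane(cam_pos, look_dir, axis_origin, look_dir)
    if hit is None:
        return None  # the plane is behind the camera
    s = dot(sub(hit, axis_origin), axis_dir)
    return max(min_s, min(max_s, s))
```

The returned displacement would then serve as the oscillator's target along the axis, so the drawer eases toward where the player is looking rather than snapping to it.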

The torsional case went through a similar development, but with its own unique problems. My final approach uses a combination of both computational and analytical techniques to interpret the angle around the rotation axis which best represents where the player's looking. First, I centre a static sphere collider on the axis of rotation and give it a radius that matches that of the mesh. By raycasting along the look vector, the intersection of the look vector and the surface of the sphere can be found. The point of intersection can then be converted to an angle around the axis of rotation. In the case that the look vector doesn't intersect the sphere, it is most important that the converted angle is continuous with the angle on the sphere. I found the analytical intersection of the look vector and a mathematical plane (the camera-facing plane settled on for linear grabbing) to perform well in this regard.
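As a rough Python sketch of this two-stage lookup: the example below swaps the physics raycast against a sphere collider for an analytical ray-sphere test, then falls back to a camera-facing plane through the sphere's centre. The function names, the `reference` direction (a unit vector perpendicular to the axis that marks zero degrees) and the unit-length `look_dir` are assumptions for illustration.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(a, s):
    return tuple(x * s for x in a)


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_sphere(origin, direction, centre, radius):
    """Nearest intersection of a unit-direction ray with a sphere, or None."""
    oc = sub(origin, centre)
    b = dot(oc, direction)
    disc = b * b - (dot(oc, oc) - radius * radius)
    if disc < 0.0:
        return None
    t = -b - math.sqrt(disc)
    return add(origin, scale(direction, t)) if t >= 0.0 else None


def angle_about_axis(point, centre, axis, reference):
    """Angle in degrees of point around axis, measured from reference."""
    v = sub(point, centre)
    return math.degrees(math.atan2(dot(v, cross(axis, reference)), dot(v, reference)))


def torsional_grab_angle(cam_pos, look_dir, centre, radius, axis, reference):
    hit = ray_sphere(cam_pos, look_dir, centre, radius)
    if hit is None:
        # Fallback: plane through the centre whose normal is the look direction.
        t = dot(sub(centre, cam_pos), look_dir)
        if t < 0.0:
            return None
        hit = add(cam_pos, scale(look_dir, t))
    return angle_about_axis(hit, centre, axis, reference)
```

When the look ray just grazes the sphere, the tangent point is also the point where the ray crosses the camera-facing plane, so the two stages agree at the boundary and the angle does not jump.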

Grab Interaction

Linear Grab Interaction

Torsional Grab Interaction

Player Grab

Player Linear Grab

Player Torsional Grab

Heads-Up Display

As pressing the wrong button could mean the difference between life and death, it must be abundantly clear to the player what interactions are available at any given time. Outlines worked well to convey which object would be interacted with, but didn't help illustrate the action at hand. We therefore introduced a dynamic reticle; one that switches to a gestured hand based on the Interactable Type.

Interactable Type

Reticle

Due to the unconventional nature of the interaction system, some players faced initial difficulty with the more complex interactables, such as valves. We therefore introduced control prompts, which briefly illustrate how to perform an interaction. An interactable such as a valve may have one control prompt before being grabbed and another whilst being grabbed. Each Interactable Mode therefore has its own control prompt, which is displayed as appropriate. All the interactable-specific HUD properties are compiled and stored in Interactable Settings.
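One way to picture how these pieces relate is the small Python sketch below. It is only a guess at the shape of the data: the enum members, reticle names, prompt strings and the `InteractableSettings` fields are all hypothetical, not taken from the project.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class InteractableType(Enum):
    PRESS = auto()
    HOLD = auto()
    GRAB = auto()


class InteractableMode(Enum):
    IDLE = auto()    # looked at, not yet interacted with
    ACTIVE = auto()  # currently being held or grabbed


# Hypothetical mapping from interactable type to the reticle's hand gesture.
RETICLES = {
    InteractableType.PRESS: "hand_point",
    InteractableType.HOLD: "hand_open",
    InteractableType.GRAB: "hand_grab",
}


@dataclass
class InteractableSettings:
    """HUD properties for one interactable: its type and per-mode prompts."""
    kind: InteractableType
    prompts: dict = field(default_factory=dict)

    def reticle(self):
        return RETICLES[self.kind]

    def prompt(self, mode):
        # None means no prompt is shown for this mode.
        return self.prompts.get(mode)


valve = InteractableSettings(
    InteractableType.GRAB,
    {InteractableMode.IDLE: "Hold LMB to grab",
     InteractableMode.ACTIVE: "Move mouse to turn"},
)
```

A HUD controller would then only need the settings of whatever the player is looking at, plus its current mode, to pick both the reticle and the prompt.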

Interactable Mode

Prompt Info

Prompt

Interactable Settings

HUD Controller

After many playtests, we found players would often ignore the control prompts, even when they were struggling to pick up a mechanic! This was surely not helped by the fact that every interactable in the game displays a prompt. Given more iterations, we would have tutorialised the mechanics and shown prompts only when necessary, such as on the first encounter with a new mechanic.

Character Controller

I am particularly happy with the feel of the crouch and the jump, which came about as a result of a malleable curve-based system.

Falling and Jumping

With the aim of giving designers complete control over the player's jump trajectory, I opted to explore curve-based (rather than purely numeric) parameters. This extended to controlling how a player would fall when walking off a ledge, and that's where the discussion will begin.

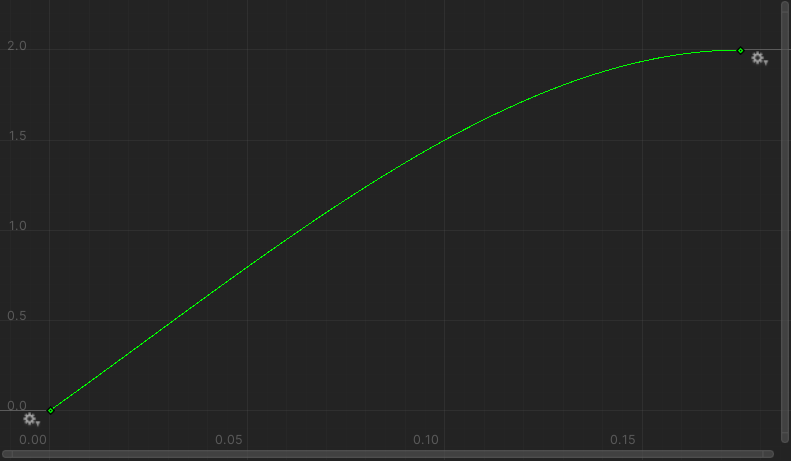

Quite simply, the only parameter required for falling is the fall curve: a graph of height against time. Using the curve to fall is then just a matter of sampling the curve at each update and setting the relative height of the player appropriately. Given that curves made in Unity's editor only span a finite interval, the gradient at the end of the fall curve is sampled and extended linearly once the curve is complete.
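The sampling and end-gradient extension can be sketched in Python as follows. This is an illustration under stated assumptions: the `Curve` class here is a hypothetical piecewise-linear stand-in for an editor-authored curve (Unity's curves are smooth splines), and the gradient is taken from the last segment.

```python
from bisect import bisect_right


class Curve:
    """Piecewise-linear curve through (time, value) keys sorted by time."""

    def __init__(self, keys):
        self.times = [t for t, _ in keys]
        self.values = [v for _, v in keys]

    @property
    def duration(self):
        return self.times[-1]

    def evaluate(self, t):
        if t <= self.times[0]:
            return self.values[0]
        if t >= self.times[-1]:
            return self.values[-1]
        i = bisect_right(self.times, t) - 1
        t0, t1 = self.times[i], self.times[i + 1]
        v0, v1 = self.values[i], self.values[i + 1]
        return v0 + (v1 - v0) * (t - t0) / (t1 - t0)

    def end_gradient(self):
        """Slope of the final segment, used to extend the curve."""
        dt = self.times[-1] - self.times[-2]
        return (self.values[-1] - self.values[-2]) / dt


def fall_height(curve, elapsed):
    """Height relative to the ledge after `elapsed` seconds of falling."""
    if elapsed <= curve.duration:
        return curve.evaluate(elapsed)
    # Past the end of the curve: keep falling at the final gradient.
    overshoot = elapsed - curve.duration
    return curve.evaluate(curve.duration) + curve.end_gradient() * overshoot


fall_curve = Curve([(0.0, 0.0), (0.25, -0.5), (0.5, -2.0)])  # sample data
```

With this sample curve the final gradient is -6 units per second, so one second into the fall the player is 5 units below the ledge.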


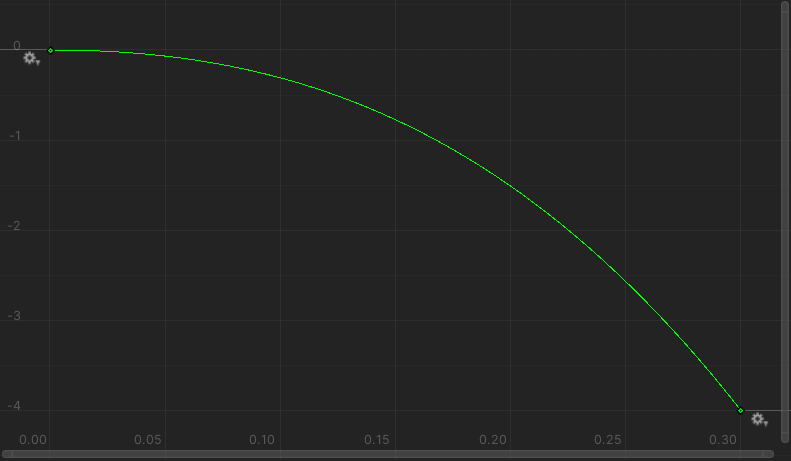

Jumping requires a jump curve, and a similar procedure is adopted. In order to give the player more freedom over jump height, dynamic jump durations are supported. Because the height of a jump cannot be predicted before the jump begins, shortened jumps make the trajectory discontinuous: the prematurely dismissed rise curve is unable to finish flattening out before the fall curve starts. Such a jump is found in Metroid! For Get Me Out, this tradeoff was also acceptable, but a platformer would likely need to develop this approach further.


If continuity is the aim, then another way to tackle the problem of a dynamic rise trajectory is to implement how the fall curve works but in reverse. For instance, the initial gradient of the rise curve can be followed until the jump button is released or the maximum jump duration is exceeded, at which point the remainder of the rise curve is followed. That's the approach we ended up using on Get Me Out, and it has performed quite well for our needs. A final piece of flavour I like to add to dynamic height jumps is a minimum jump duration. This prevents teeny-tiny jumps, ensuring that even the briefest of taps results in a satisfying hop.
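A small Python sketch of this "fall curve in reverse" idea follows. It assumes the rise curve is available as a function of time whose slope at zero equals `initial_gradient`; the quadratic `sample_rise`, the function names and the hold limits are all hypothetical values chosen for the example.

```python
def sample_rise(t):
    """Hypothetical rise curve: starts at 5 units/s and flattens out at 0.2 s."""
    t = min(max(t, 0.0), 0.2)
    return 5.0 * t - 12.5 * t * t


def jump_height(elapsed, release_time, rise=sample_rise, initial_gradient=5.0,
                min_hold=0.1, max_hold=0.4):
    """Height above take-off `elapsed` seconds into the jump.

    release_time is when the jump button was let go (seconds after take-off),
    or None while it is still held.
    """
    if release_time is None:
        hold = max_hold
    else:
        # Clamp so taps still give a minimum hop and long holds are capped.
        hold = min(max(release_time, min_hold), max_hold)
    if elapsed <= hold:
        # Hold phase: follow the rise curve's initial gradient.
        return initial_gradient * elapsed
    # Release phase: play the rise curve from its start on top of the hold height.
    return initial_gradient * hold + rise(elapsed - hold)
```

Because the release phase begins with the same gradient as the hold phase, the height and its slope both stay continuous at the moment of release. With these sample values an instant tap peaks at 1.0 unit and a full hold at 2.5 units.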

Player Fall

Player Jump

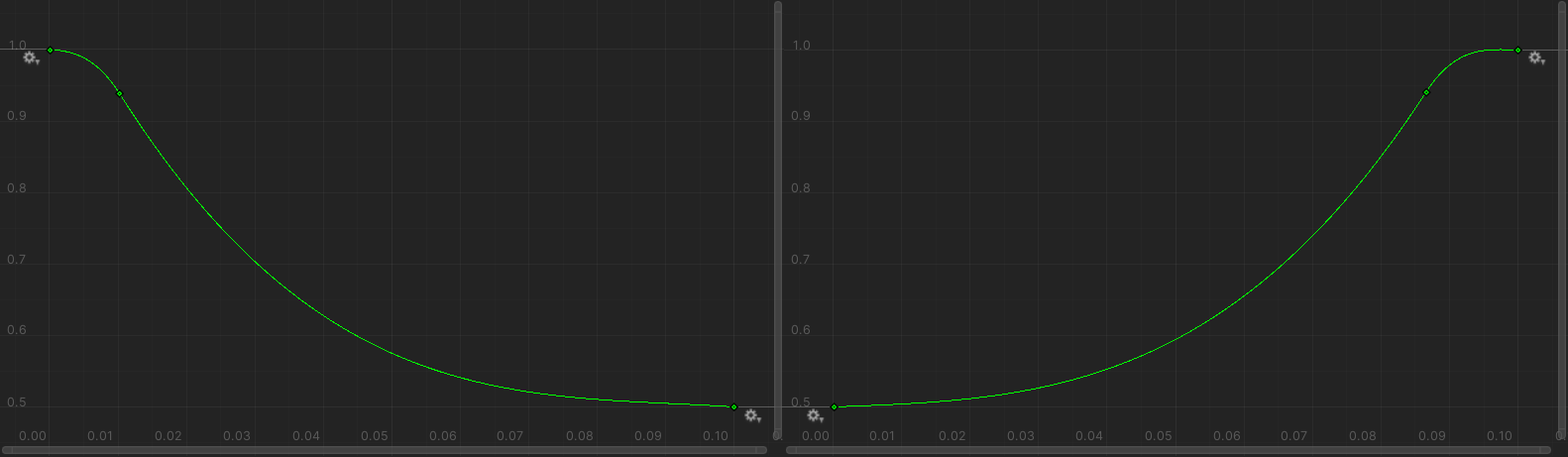

Crouching

Crouching was implemented similarly to falling and jumping, except that the crouch curves also control the height of the player's capsule collider. This allowed the player to fit snugly underneath pipes and the like. I was pleased to find crouch jumping worked nicely out of the box, hurrah!
